My name is Dan Wang, and I am an incoming Assistant Professor at the University of Arizona. I am currently a postdoctoral researcher at the University of California, San Diego, where I am advised by Professor Ravi Ramamoorthi. Previously, I worked at the University of Copenhagen in association with the AI Pioneer Centre, where I was advised by Professor Serge Belongie. I received my Ph.D. in Electrical and Computer Engineering from the University of British Columbia, where I was supervised by Professor Z. Jane Wang and Professor Tim Salcudean. I obtained my Master's and Bachelor's degrees from Beihang University.

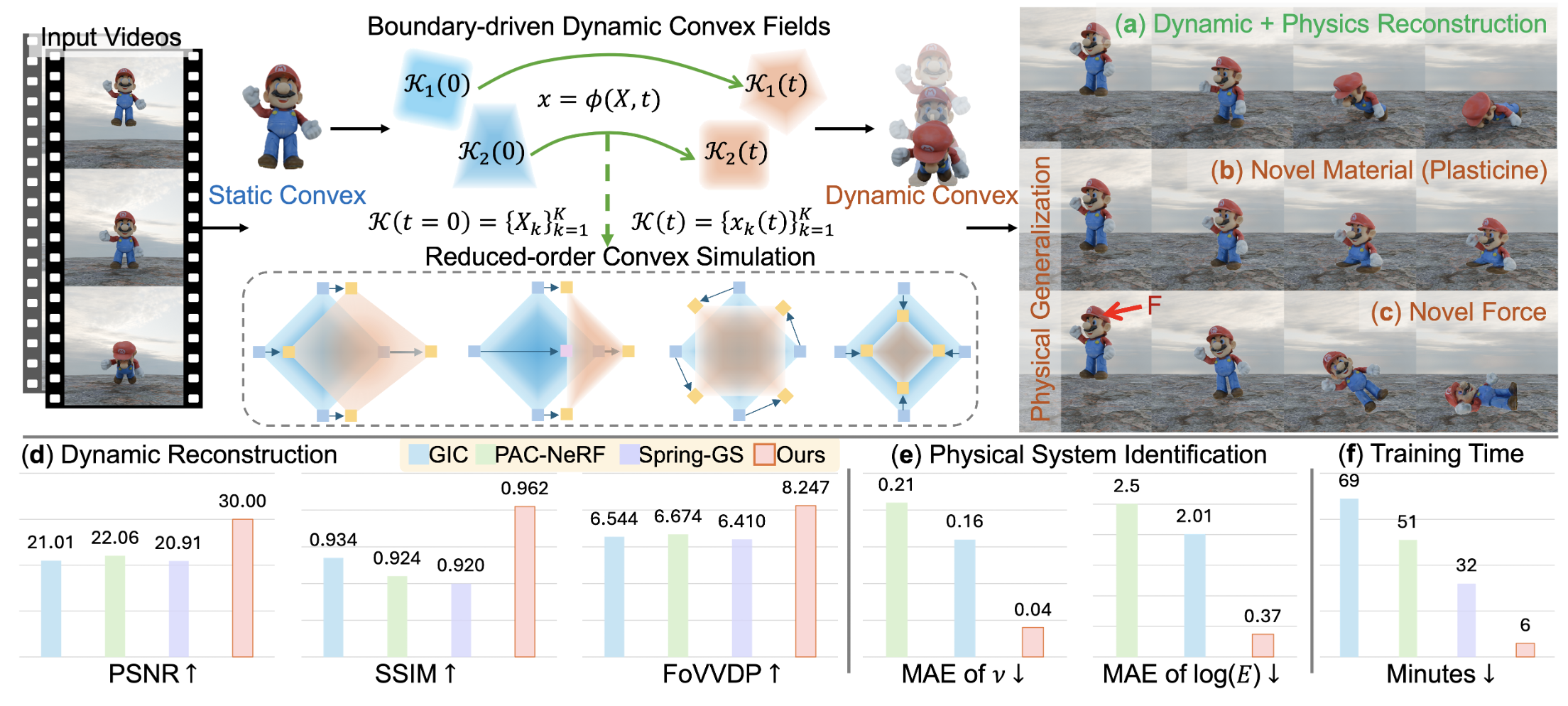

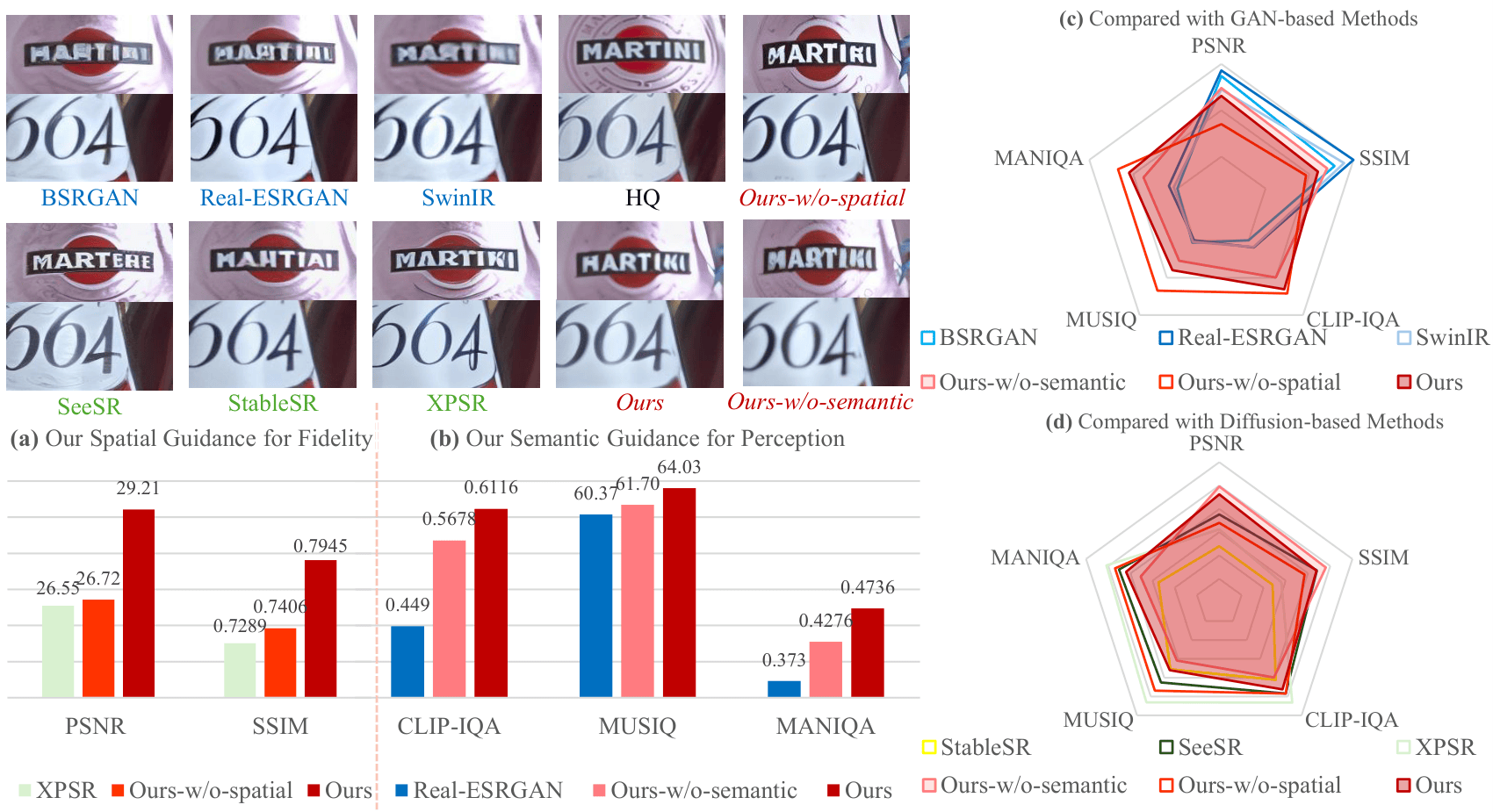

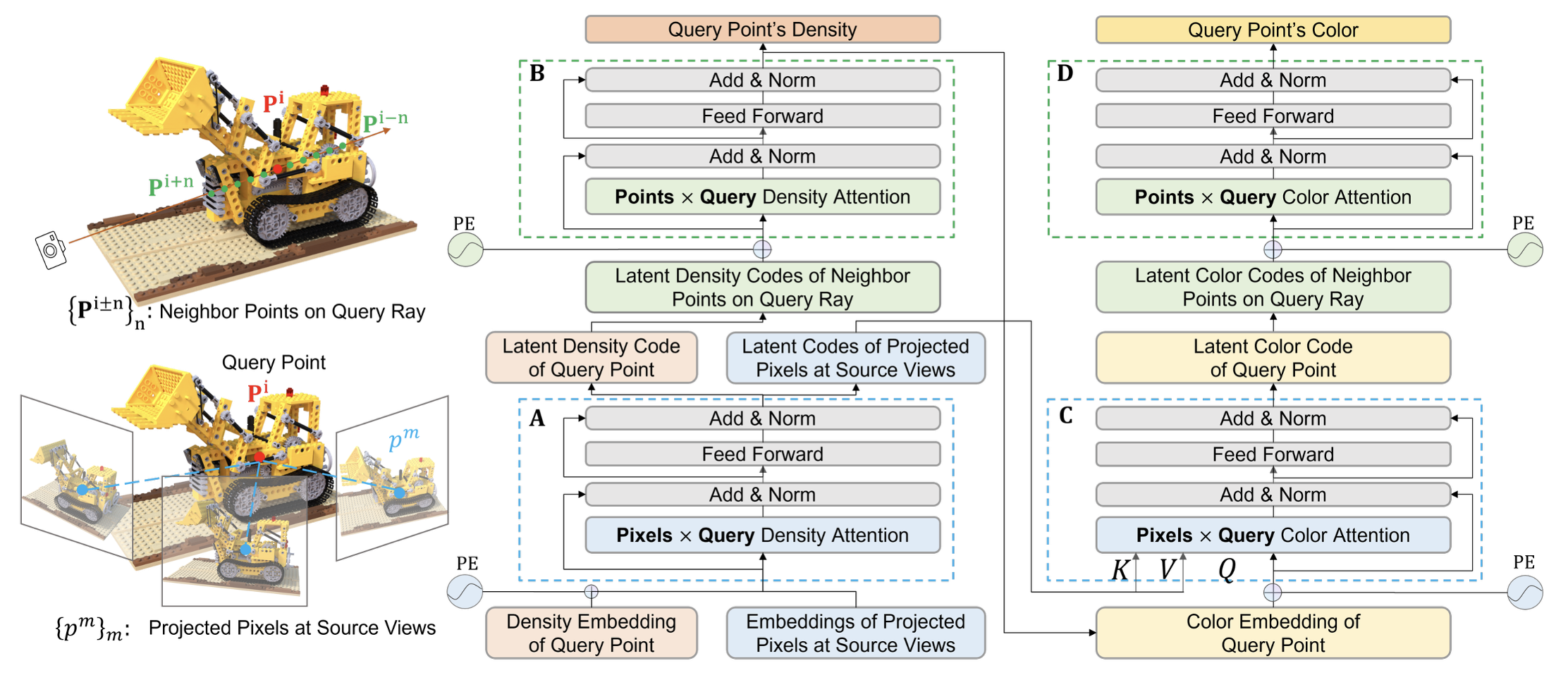

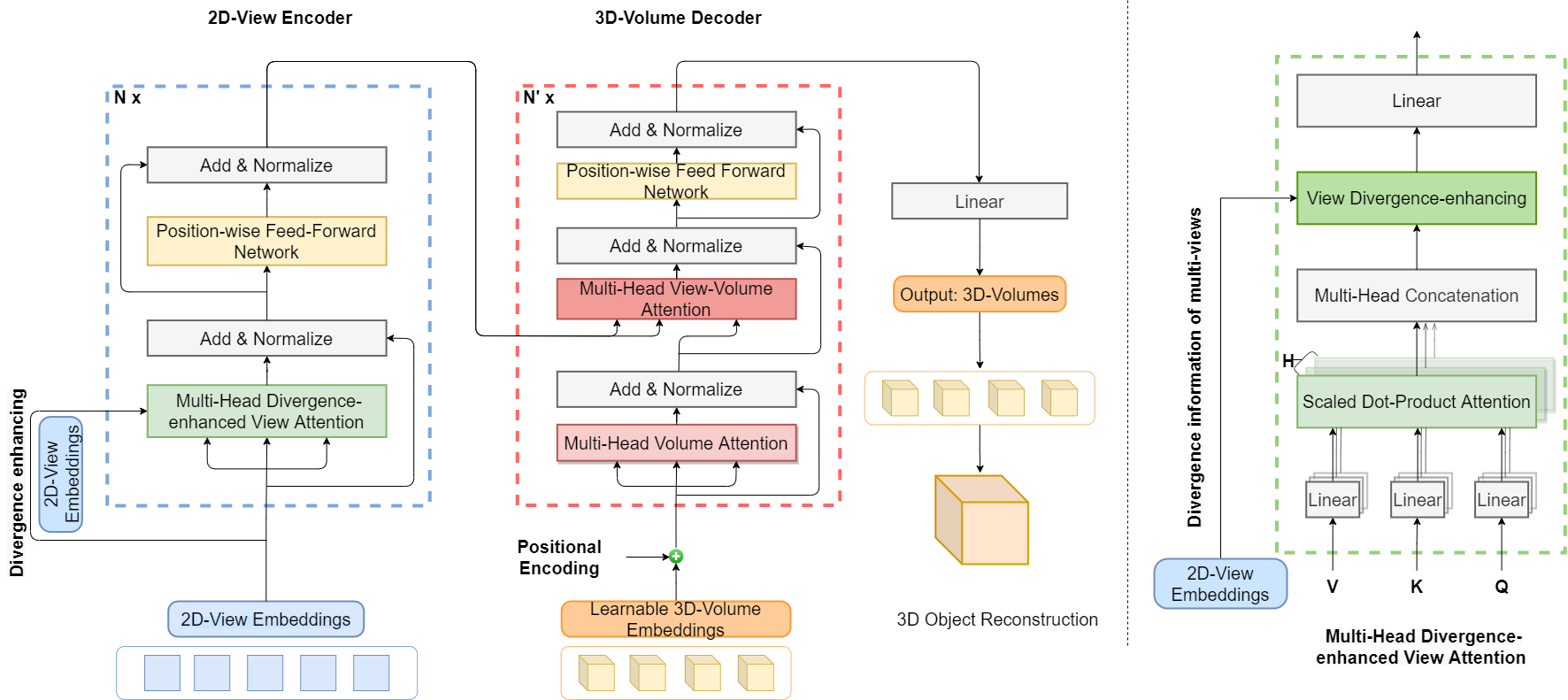

My research lies at the intersection of Computer Graphics, Computer Vision, and Artificial Intelligence. My overarching goal is to advance AI systems that seamlessly integrate environmental understanding with adaptive interaction to enhance human life. I focus on developing next-generation 3D reconstruction and rendering methods for human characters and complex dynamic scenes. By unifying classical graphics principles with modern deep learning approaches, my work aims to build systems that are not only high-performing but also interpretable and physically grounded.

🚀 Ph.D. Openings Available

I am actively recruiting motivated Ph.D. students to join my research group, with openings expected as early as Spring 2027 or Fall 2027. Please apply through the University of Arizona's official Ph.D. application process and list my name in your application. You are also welcome to email me your CV/resume, transcripts, and a brief description of your research interests.